The challenge is that the 3PL warehouse needs to send the company shipment information. Items shipped, quantities shipped, and shipment tracking numbers. The company needs to update their orders in Dynamics GP so that they can generate invoices.

The 3PL uses a SaaS service that can provide this shipment information, but that SaaS service can only call a web service.

And this small business doesn't have a web service. Or internal developers. Or an internal IT staff.

What can we do to help this company?

Do we try to help them used the outdated and problematic native Dynamics GP Web Services, and see if we run into problems with authentication, SOAP, limited endpoints, and fixed functionality? Risky, with an uncertain outcome, and difficult to estimate the effort and cost.

Do we propose that they develop a custom modern web service that will be highly flexible and fast? We can create a pretty accurate estimate of the effort for this project, but it is expensive for the customer, feels like overkill for a single endpoint, and will require a developer to maintain the code going forward.

Do we consider something like Azure Functions, which eliminates the need for infrastructure and developing a full web service, but still requires a developer?

Or is there another option?

What about Microsoft Flow?

No, really. Not joking.

Can Microsoft Flow address the challenge of building a custom, modern, web service that will allow the customer to receive the shipment data from the 3PL's SaaS service?

Let's find out.

Here's a video presentation discussing Flow web services and walking through the creation of the sample Flow:

If we break down the project, there are a few general requirements that we need to address:

1. Web service - We need a web service endpoint that the 3PL can call

2. Data storage - We need to store the incoming data, at least temporarily or for archiving or logging

3. ERP import - We need to eventually get the shipment data into the ERP system

Consider that these 3 items do not have to be accomplished with a single solution.

Items 1 and 2, which have traditionally been challenging to accomplish with Dynamics GP. (an entirely different conversation) One challenge with ERP web services is that they have defined endpoints and defined payloads that interact directly with the ERP system. What if the 3PL can only send data in a certain payload format? What if the 3PL can only make a single web service call per shipment, whereas the ERP system requires multiple calls to receive data for each shipment? What if the customer doesn't want to expose their ERP system directly on the internet? Let's just say there are complications and situations where the native ERP web service API isn't ideal for a publicly exposed web service.

But, fortunately, in the Dynamics GP world, there are several options and tools to handle item 3. SQL Server stored procedures, eConnect, and integration tools are widely used in the Dynamics GP world to handle data imports.

So, assuming item 3 is easy to handle, we really need to address items 1 and 2.

And Microsoft Flow can actually handle both of those with relative ease.

Let's start by creating a new flow with an HTTP Request trigger.

And in our scenario, the 3PL has provided us with a sample payload for the order shipment data.

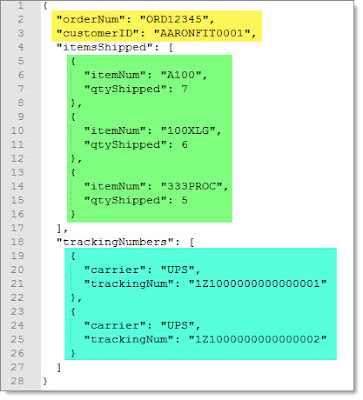

Notice that the JSON payload has some header values, then an array of items shipped, followed by an array of tracking numbers.

So we can just paste that into the HTTP request payload window.

Click on Done, and the JSON Schema will be automatically generated for us. This is AMAZING.

Now that we have our HTTP Request trigger setup, let's add an action.

Because the 3PL shipment payload can contain multiple items and multiple tracking numbers, we'll need an Apply To Each step, which can loop through an array in the JSON.

As soon as the Apply to Each is added, Flow detects the arrays in our JSON schema, and automatically provides us with the option to loop on itemsShipped and trackingNumbers.

Have you noticed that we haven't written a line of code yet?

And that Flow is doing all of this work to figure out what we want to do and provide what need to do it?

Is your brain melting yet? Mine was when I first saw this.

Let's choose the itemsShipped object first and then add a new SQL Server action.

Let's choose an Insert Row action to store the items shipped in a database table.

To prepare for this, I have created a Azure SQL Database. But you can also use an on-prem SQL Server with the On-premises Data Gateway.

In my FlowStaging database, I have created a few tables to store the shipment data from the 3PL.

cstbShipments will store the item shipments for the orders, and cstbTrackingNumbers will store the order tracking numbers.

Once we setup a connection to this Azure SQL Database in Flow, it displays the available tables.

Once we choose the SQL table, flow displays the fields available in the table and presents us with a list of values from the JSON schema that we can insert into the table.

With a few clicks, we can map the JSON fields to the SQL table fields for the insert.

With that step complete, let's click on Save to save the new Flow.

After the Flow is saved, a URL is automatically generated for our Flow that will serve as our public HTTP endpoint!

The URL looks something like this:

https://prod-24.westus.logic.azure.com:443/workflows/ffeeaa54b67574311bcf1b962feefe9d5/triggers/manual/paths/invoke?api-version=2016-06-01&sp=%2Ftriggers%2Fmanual%2Frun&sv=1.0&sig=Z9VchRZv-gVInSYmZDx1rBSVmoqvOUKH_5mZVjT5f99

So with just a handful of clicks, and ZERO CODE, we now have a public web service endpoint that can receive a JSON payload, parse the data, and save the records to a SQL Server database.

If we paste the URL into a browser, we can verify it works.

And if we launch Postman, paste in the URL, paste in a sample JSON payload, and select application/json data type...

...we can test our new Flow web service.

After submitting the POST request using Postman, we receive a prompt 202 Accepted response.

And if we then query the SQL database tables, we now see the item data.

So the Flow has saved the data in the cstbShipments table, but we aren't yet saving the tracking data. Let's update the Flow to take care of that.

We could add a new Apply to Each loop below the existing one, to produce a sequential step to insert the records, or we could get Extra Fancy and insert a parallel branch to handle the tracking numbers.

And with a few more clicks, we now have a loop to insert tracking numbers into the SQL database table.

After clicking Save, we can use Postman to test the Flow again.

And now we have data in both tables.

From start to finish, this entire flow can be created in minutes. With just a sample JSON payload and a SQL database, you can create a public HTTP web service endpoint that can receive data and store it in a database.

Zero code. Just clicks of a mouse.

For customers with limited resources and limited budget, this is a very valuable offer.

But with this convenience come a few caveats. Here are a few things to consider:

1. The Flow HTTP Request action appears to only accept JSON, not XML

2. Flow may not work well with complex JSON payloads, such as those with nested arrays

3. Flow may not work with highly variable JSON structures. (if a value or array sometimes exists, and sometimes doesn't)

4. The performance of the Flow SQL data connector is not great. My testing shows that it takes about 1 second per insert, so inserting 10 record could take a full 10 seconds. Adjusting the concurrency can improve this dramatically, but based on my testing, the minimum response time is 1-2 seconds when using a SQL Server connector.

5. The default response from the HTTP Request action is "202 Accepted", which is returned after the request is received, before the Flow has completed, so the caller does not receive feedback about success or failure.

You can add a Response action at the end of the Flow, to return a custom response to the caller. And you can even add Try / Catch actions in your Flow to return success / failure responses.

The problem with using a Response at the end of the Flow is the delay that this introduces in the web service call. If your Flow takes several seconds to run and complete, the response will take that long to be returned to the caller.

Due to this processing delay, the Flow web service may not be the best option for a highly synchronous web service where a fast response is required.

6. While Flow does have some error handling options, they are not as comprehensive as what can be provided with a custom ASP.NET Core web service or an Azure Function, where you can write complex code to handle errors, validation, etc.

7. Some companies may not be comfortable with authentication based on the URL only. It may be possible to add an additional layer of authentication in the Flow, such as using a value in the JSON to authenticate against a Flow variable or a SQL record, but the customer will need to be clear on what they are comfortable with for security.

But even with these caveats, the ability to create a zero code custom web service in just a few minutes is incredibly valuable.

Steve Endow is a Microsoft MVP in

Los Angeles. He is the owner of Precipio Services, which provides

Dynamics GP integrations, customizations, and automation solutions.

No comments:

Post a Comment

All comments must be reviewed and approved before being published. Your comment will not appear immediately.